Benford’s Law Basics

November 11, 2019

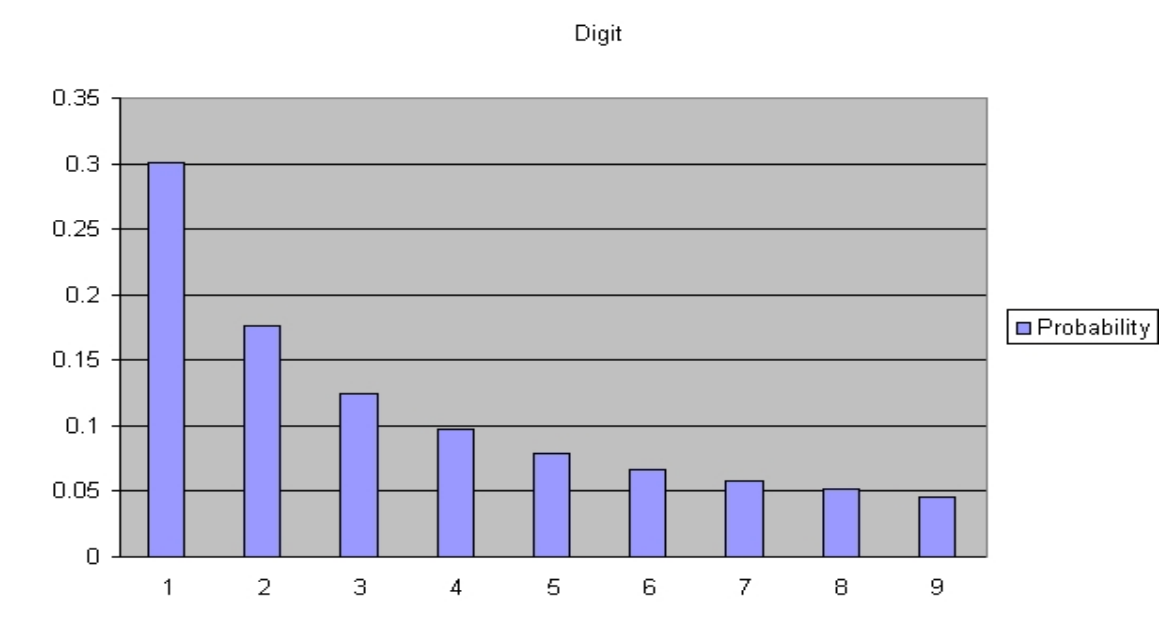

It’s one of the more surprising laws of nature: in many real-world datasets, more numbers start with 1 than with any other digit.

I’ve come across Benford’s Law a few times before, but today was the first time I fully understood it.

It’s amazingly unintuitive. Benford’s Law states that in large enough, naturally occurring sets of numbers, more numbers start with smaller digits than with larger ones. So in a big dataset that follows the law, there will be more numbers beginning with 1 than with 2, more beginning with 2 than with 3, and so on. Benford’s Law predicts that numbers whose leading digit is 1 occur about 6.6 times as often as numbers beginning with 9 (roughly 30.1% versus 4.6%).
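The standard statement of the law gives the probability that the leading decimal digit is d as P(d) = log10(1 + 1/d). A minimal Python sketch that tabulates those probabilities and checks the 1-versus-9 ratio:

```python
import math

# Benford's Law: probability that the leading decimal digit is d, for d = 1..9.
benford = {d: math.log10(1 + 1 / d) for d in range(1, 10)}

for digit, p in benford.items():
    print(f"{digit}: {p:.1%}")

# The terms telescope to log10(10) = 1, so this is a proper distribution.
print(f"total: {sum(benford.values()):.3f}")
# How much more often a leading 1 appears than a leading 9.
print(f"1 vs 9: {benford[1] / benford[9]:.2f}x")
```

A leading 1 comes out at about 30.1% and a leading 9 at about 4.6%, a ratio of roughly 6.6.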

Benford’s Law holds remarkably well across a wide range of natural phenomena, provided the data span several orders of magnitude. Even wilder, it is scale invariant: if you change the units you measure in, the individual values change, but their leading digits still follow Benford’s Law.
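Scale invariance is easy to see in a simulation. This Python sketch assumes synthetic, hypothetical data: 100,000 values spread evenly in log space over six orders of magnitude, treated as lengths in inches and then converted to centimetres. The helper names `leading_digit` and `digit_frequencies` are my own, not from any library.

```python
import math
import random

def leading_digit(x: float) -> int:
    """First significant decimal digit of a positive number."""
    # Scientific notation puts the leading digit first, e.g. 254.0 -> '2.540000e+02'.
    return int(f"{x:e}"[0])

def digit_frequencies(values: list[float]) -> dict[int, float]:
    """Fraction of values whose leading digit is 1, 2, ..., 9."""
    counts = {d: 0 for d in range(1, 10)}
    for v in values:
        counts[leading_digit(v)] += 1
    return {d: c / len(values) for d, c in counts.items()}

random.seed(0)
# Hypothetical measurements, log-uniform between 1 and 1,000,000 inches.
in_inches = [10 ** random.uniform(0, 6) for _ in range(100_000)]
# Exactly the same measurements, expressed in centimetres.
in_cm = [x * 2.54 for x in in_inches]

benford = {d: math.log10(1 + 1 / d) for d in range(1, 10)}
for name, data in (("inches", in_inches), ("cm", in_cm)):
    freqs = digit_frequencies(data)
    worst = max(abs(freqs[d] - benford[d]) for d in benford)
    print(f"{name}: P(1) = {freqs[1]:.3f}, largest deviation from Benford = {worst:.3f}")
```

Both runs should report a leading-1 share near 0.301 with only small deviations; the exact figures depend on the random seed.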

For the longest time, this seemed crazy to me. Why would nature be biased towards numbers beginning with low digits? Surely each leading digit should be equally likely, and sixes and sevens should be just as common as eights and nines. Today, I came across Benford’s Law again, and the penny dropped.

Benford’s Law is easier to understand if you change base. If we recorded all our data in binary, Benford’s Law would be trivial. Apart from zero, every binary number begins with 1, so in any dataset, every nonzero number has 1 as its leading digit.

Now imagine we used base-3 instead. For reference, here are the numbers 1 to 20 in base-3: {1, 2, 10, 11, 12, 20, 21, 22, 100, 101, 102, 110, 111, 112, 120, 121, 122, 200, 201, 202}.

Because we’re in base-3, the numbers 1 to 20 already span three orders of magnitude (one-, two- and three-digit numbers). We can start to see Benford’s Law emerge: only seven numbers in our sample begin with 2, while 13 begin with 1.
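The base-3 tally can be checked with a few lines of Python. `to_base` is a hypothetical helper written for this example; it only handles bases 2 to 10, where every digit is a single character.

```python
from collections import Counter

def to_base(n: int, base: int) -> str:
    """Write a positive integer n in the given base (2 <= base <= 10)."""
    digits = []
    while n > 0:
        n, remainder = divmod(n, base)
        digits.append(str(remainder))
    return "".join(reversed(digits))

base3 = [to_base(n, 3) for n in range(1, 21)]
print(base3)
print(Counter(s[0] for s in base3))  # Counter({'1': 13, '2': 7})

# In binary every nonzero number starts with 1.
print(Counter(to_base(n, 2)[0] for n in range(1, 21)))  # Counter({'1': 20})
```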

Every time you go up an order of magnitude, you start counting again from the smallest leading digit. This means you have more opportunities to count ones than twos. If your data span several orders of magnitude, the largest values are more likely to begin with 1 than 2, because within each order of magnitude you have to pass every number beginning with 1 before you reach the numbers beginning with 2. Therefore, wherever your data happen to stop, you’ll have counted at least as many numbers beginning with 1 as with 2, and usually more. The same argument holds for higher bases.
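The counting argument can be checked directly. This Python sketch counts upward from 1 in base 10 and, at every possible cutoff, compares how many numbers so far begin with 1 versus 2. The limit of 100,000 is an arbitrary choice.

```python
def leading_digit(n: int) -> int:
    """Leading decimal digit of a positive integer."""
    return int(str(n)[0])

# Running tallies of numbers beginning with 1 and with 2 as we count upward.
ones = twos = 0
ones_always_ahead = True
for n in range(1, 100_001):
    d = leading_digit(n)
    if d == 1:
        ones += 1
    elif d == 2:
        twos += 1
    # Treat n as the cutoff: has the tally of 2s ever overtaken the tally of 1s?
    if ones < twos:
        ones_always_ahead = False

print(ones_always_ahead)  # True
print(ones, twos)         # 11112 11111
```

The two tallies tie at cutoffs such as 2 or 29,999, but the 2s never pull ahead, because each block of numbers starting with 1 is the same size as, and comes before, the block starting with 2.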

Unless your data fill a whole number of orders of magnitude evenly (e.g. every integer from 1 to 999, each appearing once), the leading digits will be skewed towards 1. And even in that case, changing the scale (the units you measure your data in) would push the leading digits away from a uniform distribution and towards the Benford regime.
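A quick Python check of the 1-to-999 example. Here "changing the units" is modelled by doubling every value, as if switching to a unit half the size; the factor of 2 is just an illustrative choice.

```python
from collections import Counter

def leading_digit_counts(values) -> Counter:
    """Count how many values start with each decimal digit."""
    return Counter(int(str(v)[0]) for v in values)

original = range(1, 1000)            # fills three whole orders of magnitude
doubled = [2 * v for v in original]  # same data in a unit half as large

print(sorted(leading_digit_counts(original).items()))  # each digit 1..9 appears 111 times
print(sorted(leading_digit_counts(doubled).items()))   # a leading 1 now appears 555 times
```

After doubling, the values run from 2 to 1,998, so every value from 1,000 upwards starts with 1, and the uniform split collapses into a heavy bias towards 1.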

Benford’s Law is much less severe in higher bases. As an extreme example, imagine we used base-1-million. We would use a different character for every number from 1 to 999,999 in base-10. Unless our dataset spans many powers of a million, we would get a roughly uniform digit distribution: if no value repeats, each base-1-million character would be used once or not at all.
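The usual form of the law for an arbitrary base b is P(d) = log_b(1 + 1/d), for d from 1 to b - 1. This Python sketch (`benford_prob` is a name chosen for the example) shows how the share of the most common leading digit, 1, shrinks as the base grows.

```python
import math

def benford_prob(digit: int, base: int) -> float:
    """Benford probability that the leading digit in `base` is `digit` (1 <= digit < base)."""
    return math.log(1 + 1 / digit, base)

for base in (2, 3, 10, 1_000_000):
    print(f"base {base}: P(leading 1) = {benford_prob(1, base):.3f}")
```

Base 2 gives 1.000, matching the binary argument, and base 3 gives about 0.631, close to the 13-out-of-20 split for the numbers 1 to 20. In base one million a leading 1 is predicted only about 5% of the time, and even that bias only shows up in data spanning many powers of a million.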

Benford’s Law applies to electricity bills, street addresses, stock prices and much more. It is especially applicable when data is generated by a power law. There are some things it doesn’t apply to, though: most notably, numbers set by psychological thresholds (buy now for only $9.99!).

I wonder if you could make money in the markets using Benford’s Law. Binary options allow you to bet on whether a stock will rise or fall, but not on the magnitude of its rise. If there were options which allowed you to bet on a security price’s leading digit, you might be able to make money with a Benford-like strategy.

Whether you’re buying stocks or counting Facebook friends, Benford’s Law works the same way. The solitary digit tends to stay ahead of the game.